Abstract

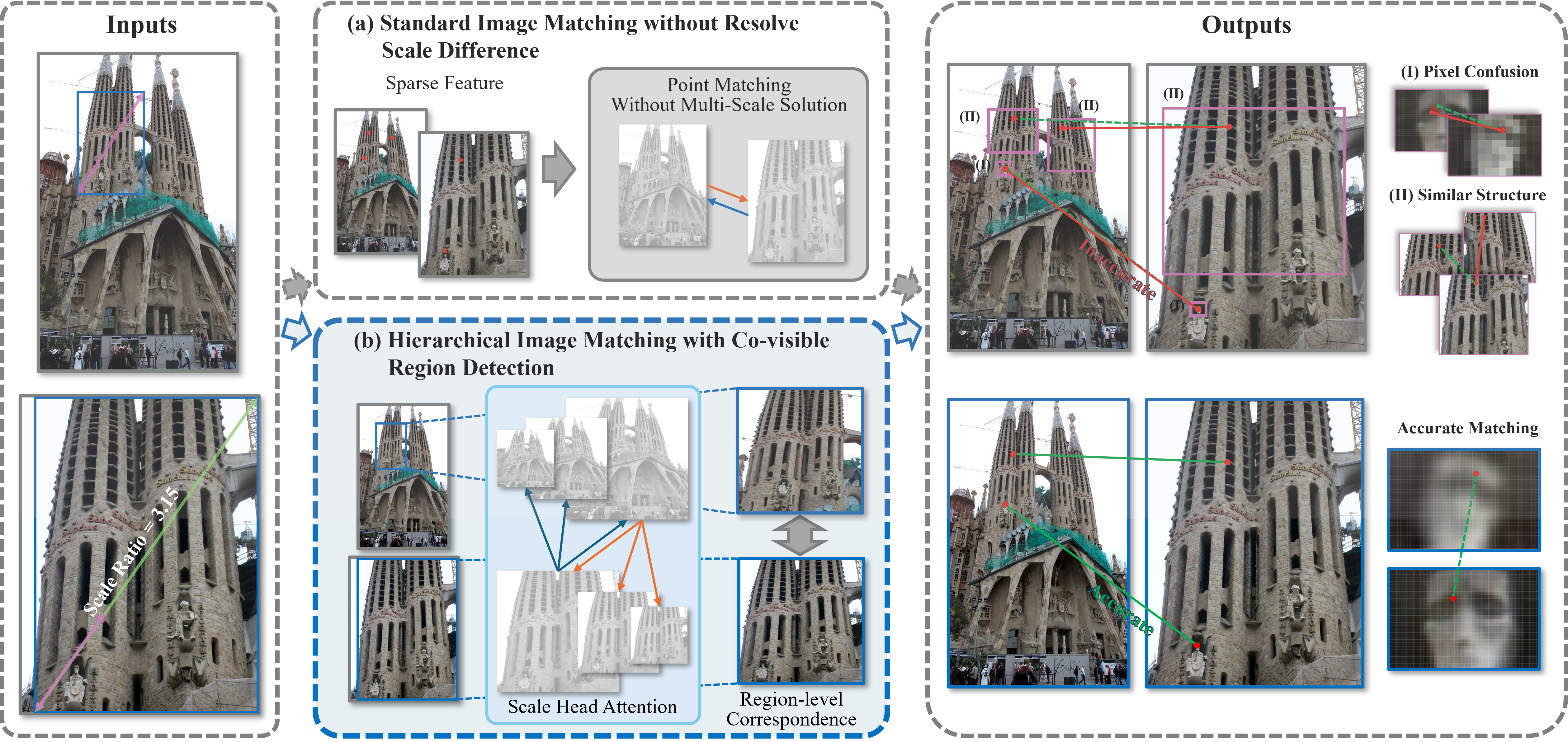

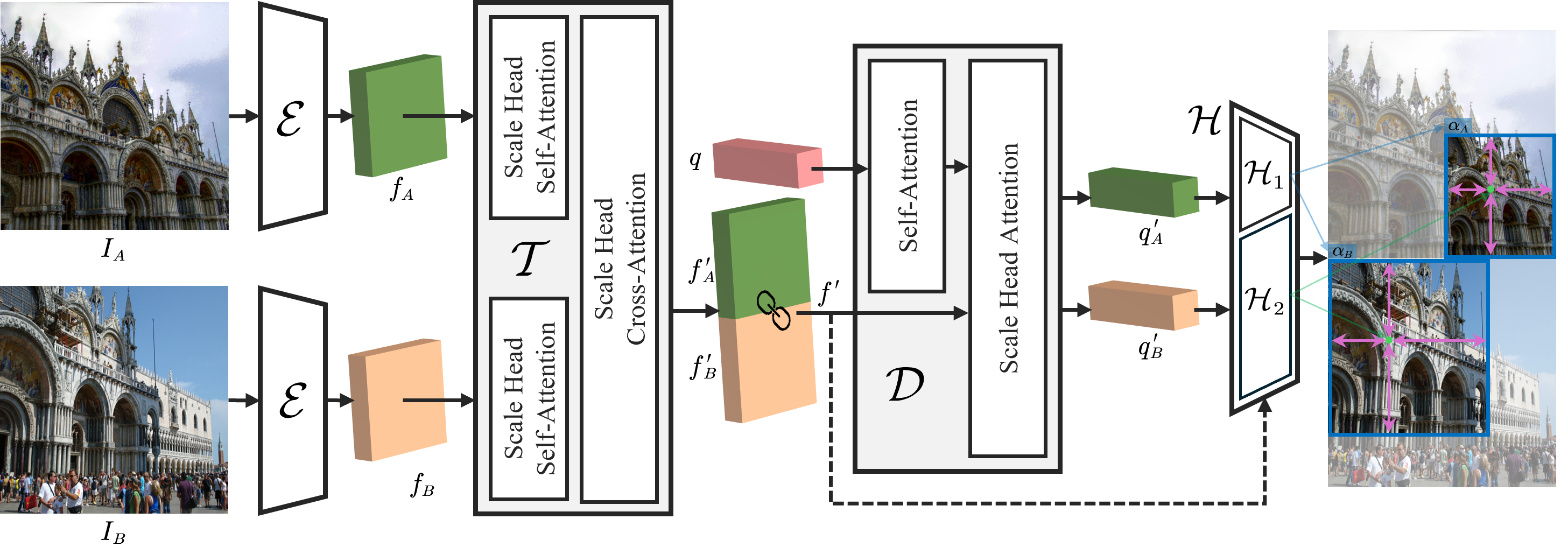

Matching images with significant scale differences remains a persistent challenge in photogrammetry and remote sensing. Scale discrepancy degrades appearance consistency and introduces uncertainty in keypoint localization. Existing methods address scale variation through scale pyramids or scale-aware training, but they do not fully resolve matching under large scale differences. We tackle the problem by detecting the co-visible regions of an image pair and propose SCoDe (Scale-aware Co-visible region Detector), which both identifies co-visible regions and aligns their scales for robust, hierarchical point correspondence matching. Specifically, SCoDe employs a novel Scale Head Attention mechanism to map and correlate features across multiple scale subspaces, and uses a learnable query to aggregate scale-aware information from both images for co-visible region detection. Correspondences can then be established in a coarse-to-fine hierarchy, which mitigates semantic and localization uncertainties. Extensive experiments on three challenging datasets demonstrate that SCoDe outperforms state-of-the-art methods, improving the precision of a modern local feature matcher by 8.41%. Notably, SCoDe shows a clear advantage when handling images with drastic scale variations.
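As a rough illustration of what "aligning the scales" of two co-visible regions could mean in practice, the sketch below assumes each region is an axis-aligned box `(x1, y1, x2, y2)` in pixel coordinates and that each crop is resized so its longer side reaches a common length. The box format, the function names, and the `long_side` parameter are assumptions made for this example; the abstract does not specify how SCoDe performs this step.

```python
import math
from typing import Tuple

# Assumed region format: axis-aligned box (x1, y1, x2, y2) in pixels.
Box = Tuple[float, float, float, float]


def relative_scale(box_a: Box, box_b: Box) -> float:
    """Linear scale of region A relative to region B (sqrt of the area ratio)."""
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    if area_a <= 0 or area_b <= 0:
        raise ValueError("boxes must have positive area")
    return math.sqrt(area_a / area_b)


def aligned_crop_size(box: Box, long_side: int = 512) -> Tuple[int, int]:
    """Target (width, height) for a crop whose longer side becomes `long_side`.

    The aspect ratio of the box is preserved up to rounding.
    """
    w = box[2] - box[0]
    h = box[3] - box[1]
    if w <= 0 or h <= 0:
        raise ValueError("box must have positive width and height")
    longer = max(w, h)
    return round(w * long_side / longer), round(h * long_side / longer)


# Hypothetical co-visible boxes: the region covers far fewer pixels in image A.
region_a: Box = (0, 0, 100, 50)
region_b: Box = (10, 10, 410, 210)

scale = relative_scale(region_a, region_b)
size_a = aligned_crop_size(region_a)
size_b = aligned_crop_size(region_b)
```

After resizing, both crops show the shared content at a comparable pixel scale, so a local feature matcher can run on the crops rather than on the full images.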

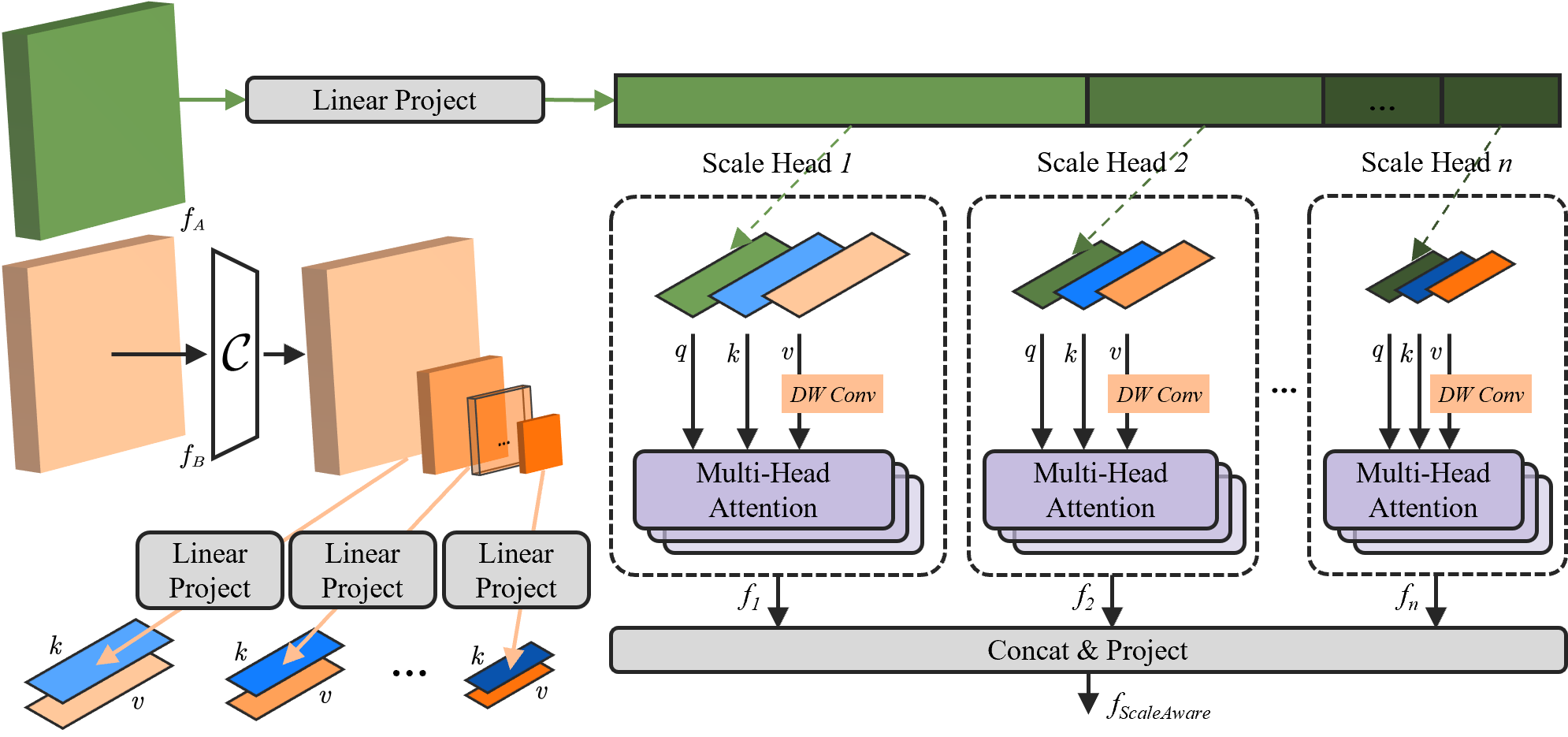

The Scale Head Cross-Attention mechanism. Features are projected into multiple subspaces of different scales through convolution groups.

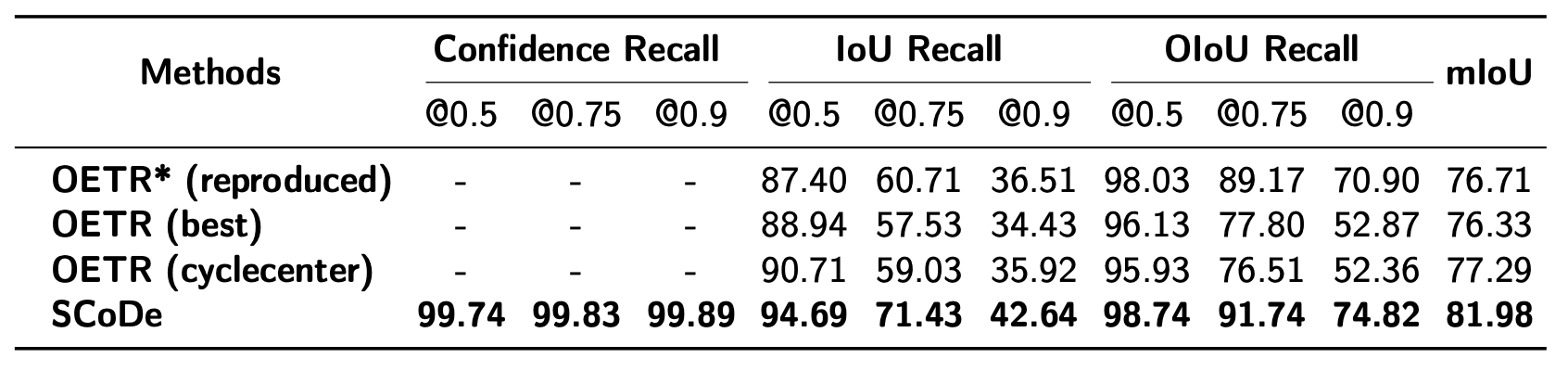

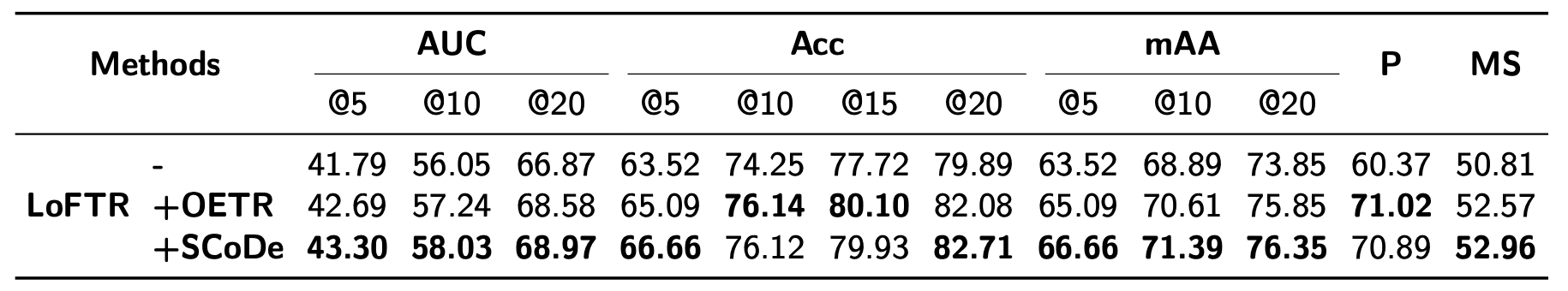

Quantitative evaluation on MegaDepth. We report recall at multiple IoU thresholds and mIoU metrics.
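The metrics named in this caption can be computed as in the minimal sketch below. It assumes one predicted and one ground-truth co-visible box per image, both given as `(x1, y1, x2, y2)`; the threshold values and function names are illustrative and not taken from the paper's evaluation code.

```python
from typing import Dict, Sequence, Tuple

# Assumed region format: axis-aligned box (x1, y1, x2, y2).
Box = Tuple[float, float, float, float]


def box_iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = max(0.0, a[2] - a[0]) * max(0.0, a[3] - a[1])
    area_b = max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def recall_and_miou(
    preds: Sequence[Box],
    gts: Sequence[Box],
    thresholds: Sequence[float] = (0.5, 0.75),
) -> Tuple[Dict[float, float], float]:
    """Recall at each IoU threshold and the mean IoU over all pairs."""
    if not preds or len(preds) != len(gts):
        raise ValueError("preds and gts must be non-empty and the same length")
    ious = [box_iou(p, g) for p, g in zip(preds, gts)]
    miou = sum(ious) / len(ious)
    # A prediction counts as recalled when its IoU reaches the threshold.
    recall = {t: sum(iou >= t for iou in ious) / len(ious) for t in thresholds}
    return recall, miou


# Toy data: one exact prediction and one that overlaps the ground truth by half.
preds = [(0, 0, 10, 10), (0, 0, 10, 10)]
gts = [(0, 0, 10, 10), (5, 0, 15, 10)]
recall, miou = recall_and_miou(preds, gts, thresholds=(0.3, 0.5, 0.75))
```

In the toy data the second pair has an IoU of 1/3, so it is recalled at the 0.3 threshold but not at 0.5 or 0.75.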

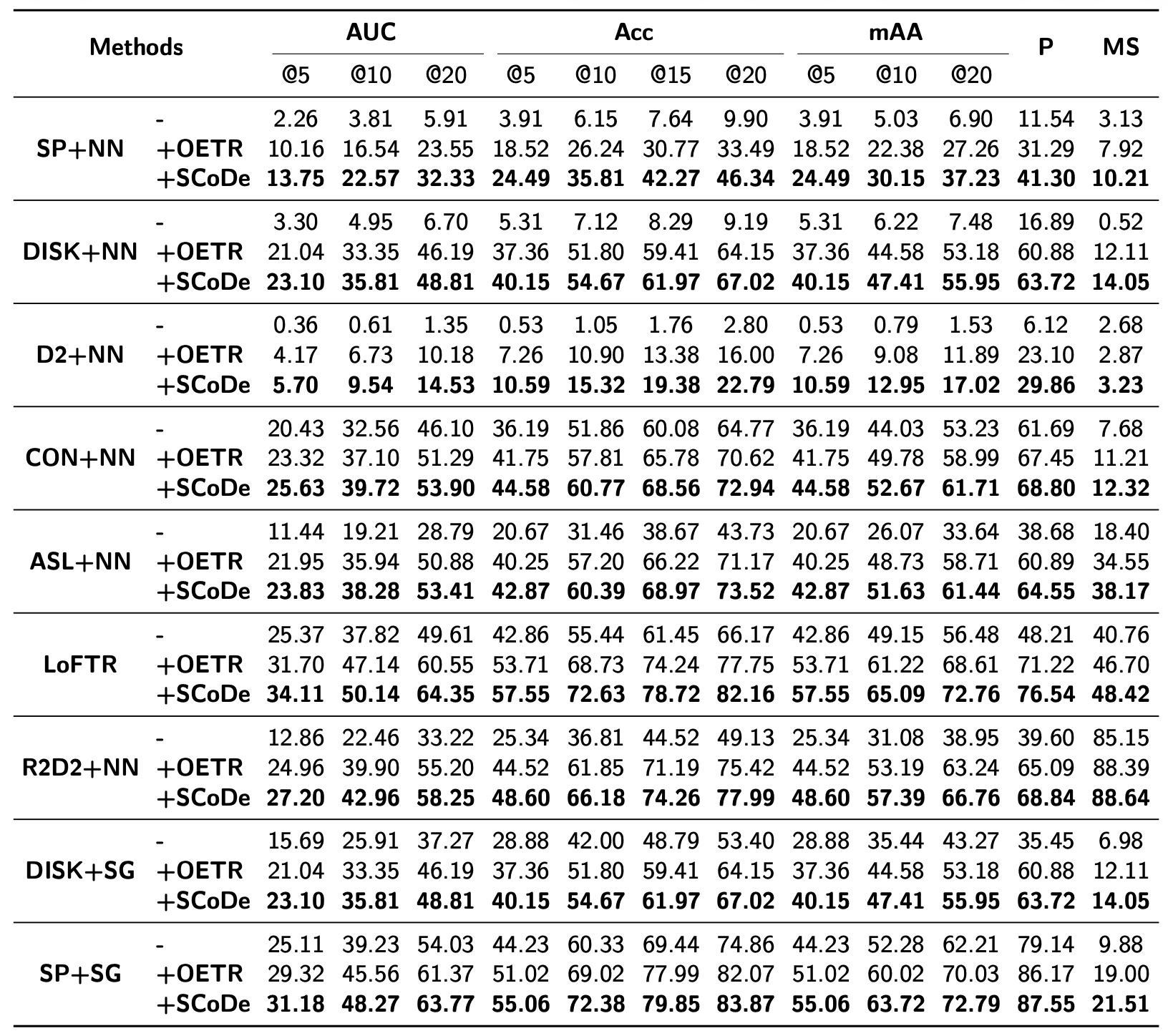

Evaluation on MegaDepth for all-range scale differences with pose estimation and matching metrics.

Evaluation for larger scale differences across multiple feature extractors and matchers.

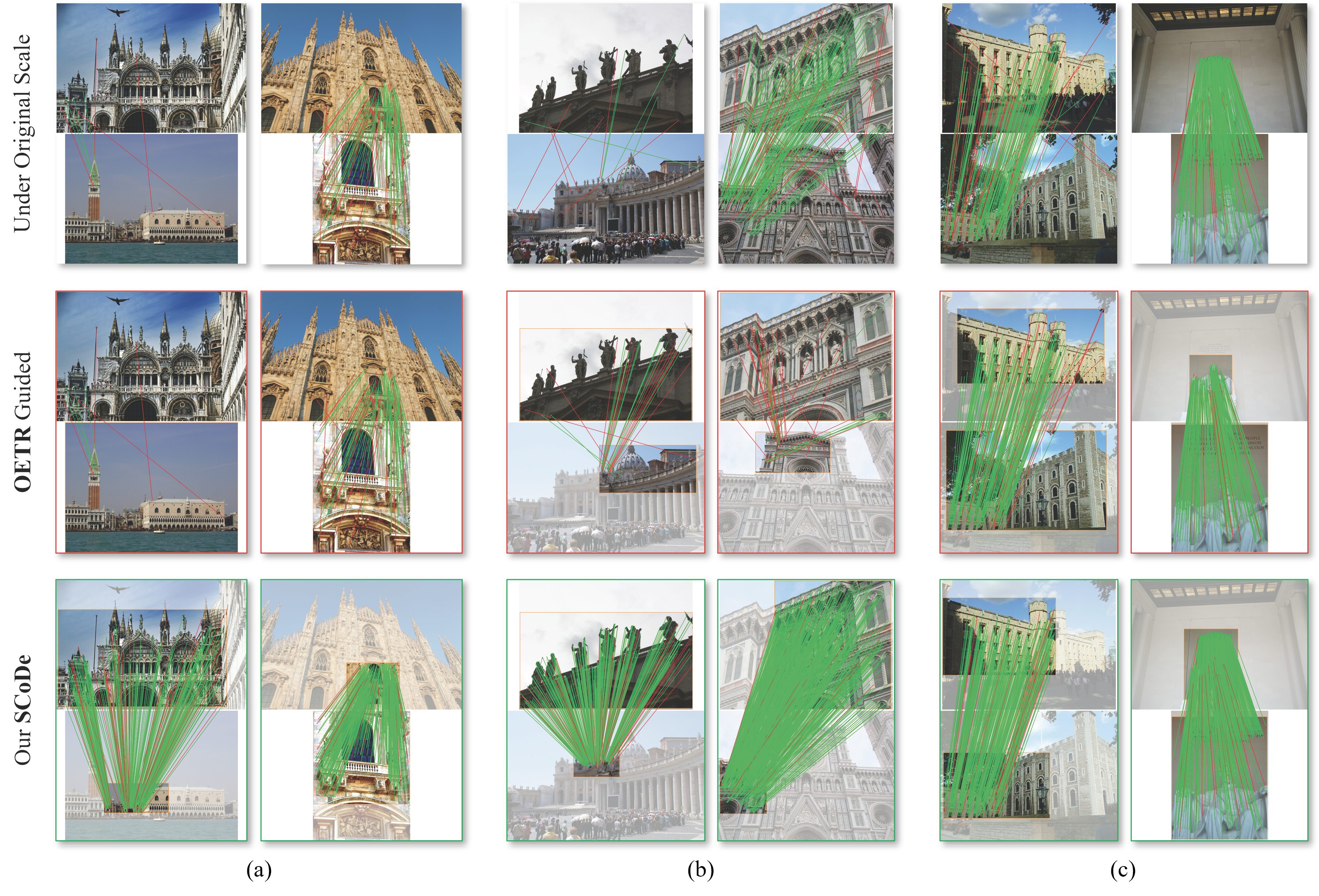

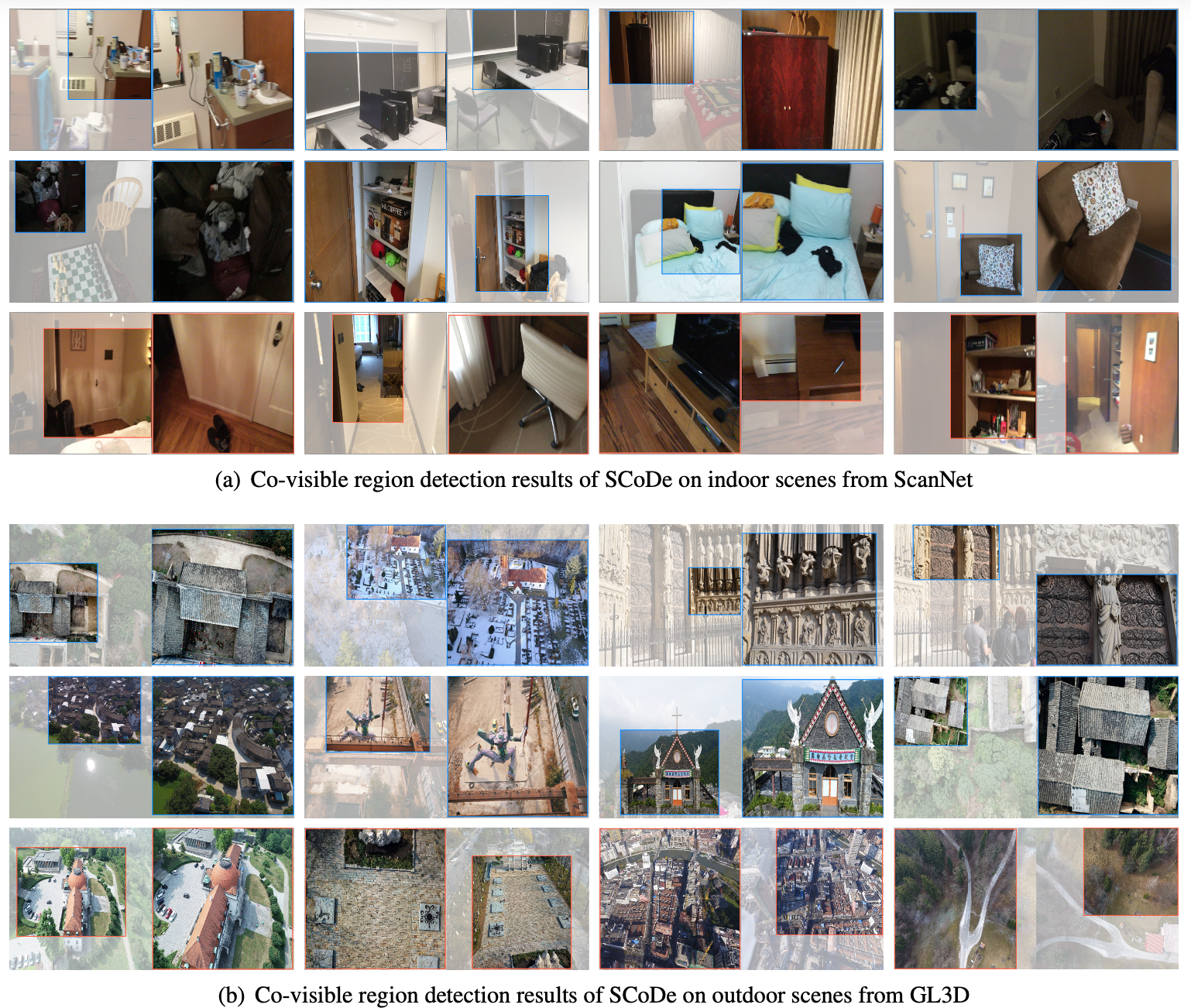

Qualitative examples showing detected co-visible regions in test scenes.

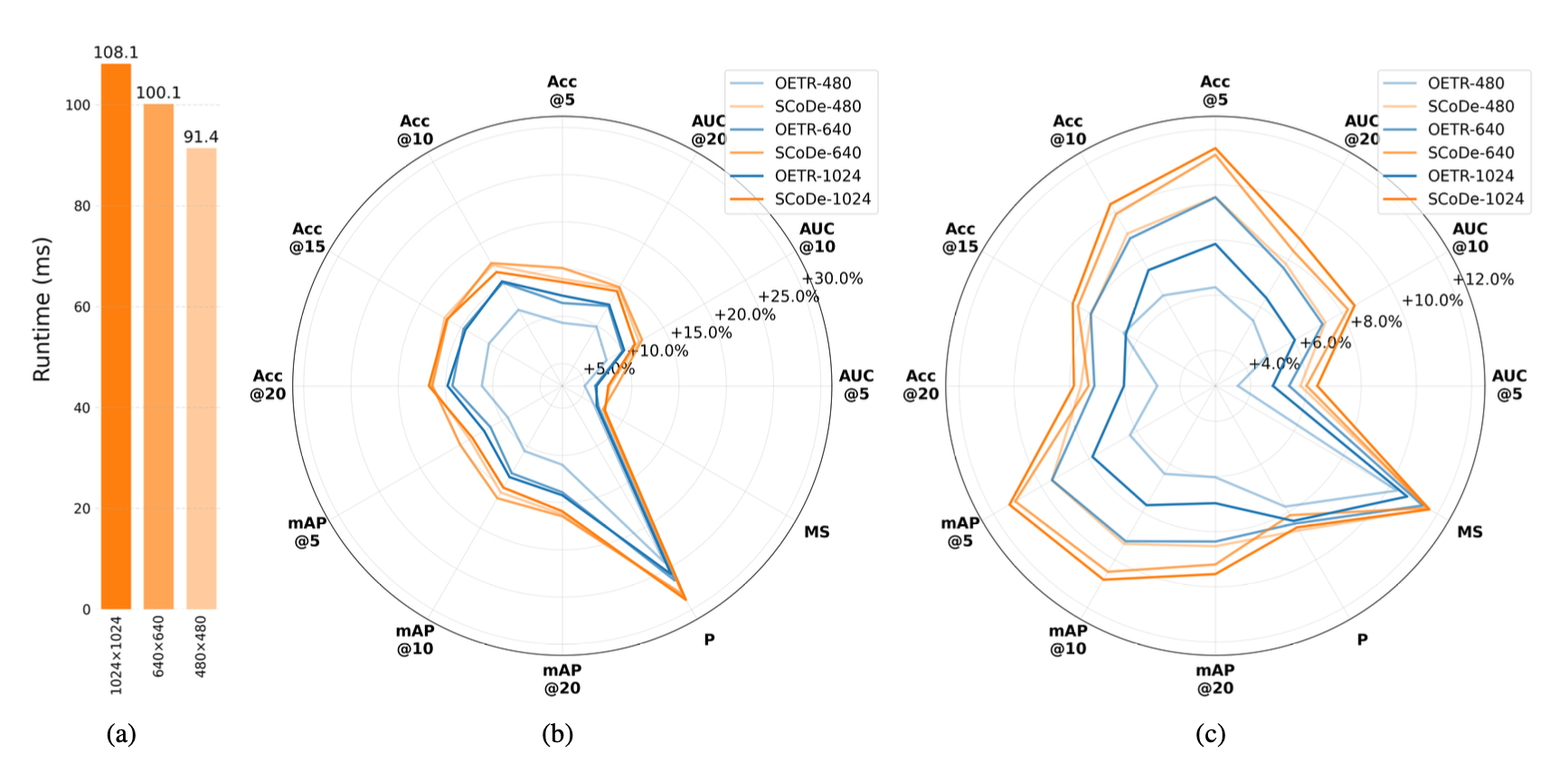

Performance and runtime analysis across different input resolutions.

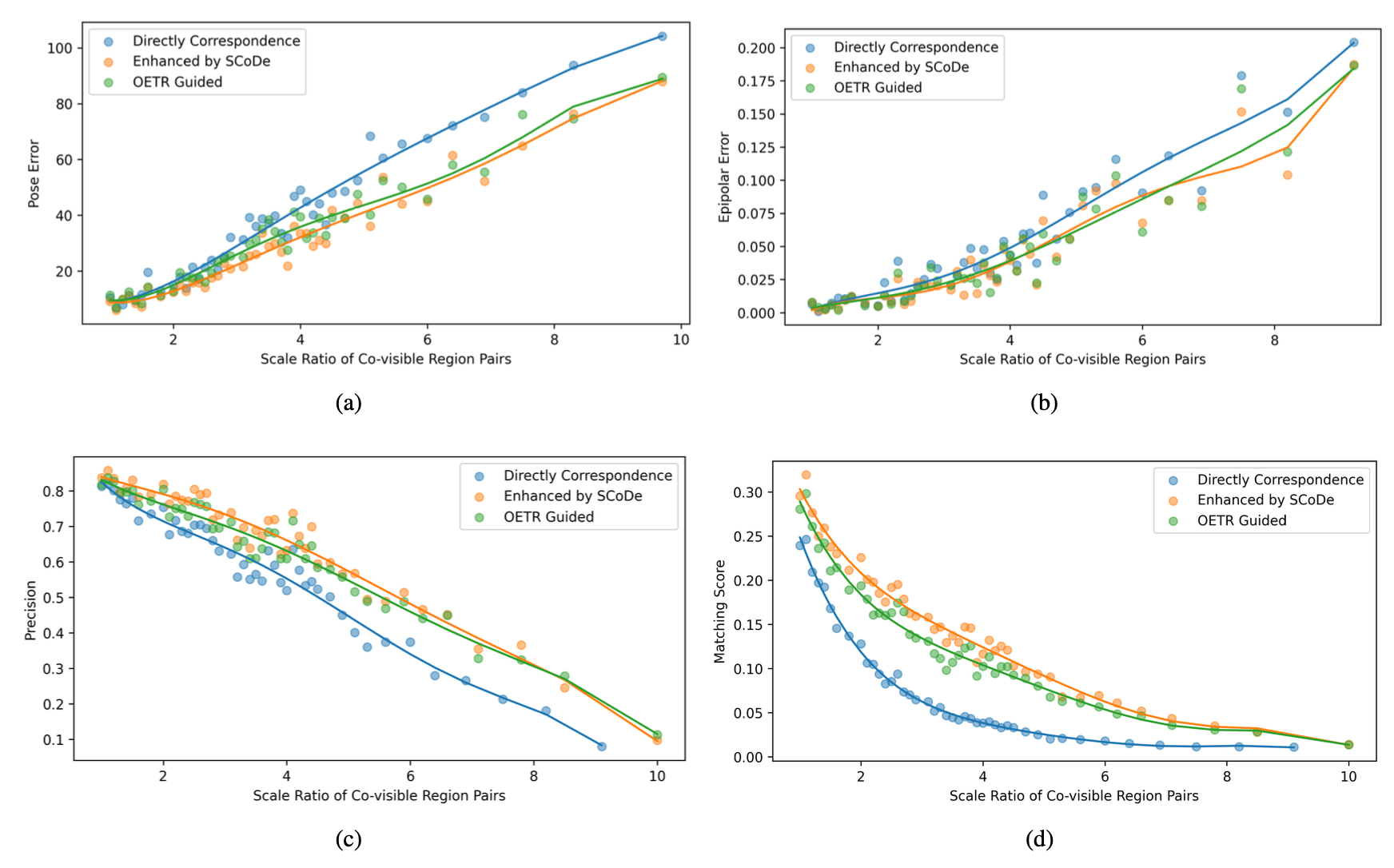

Relational trend of metrics at different scales showing improvement with SCoDe.

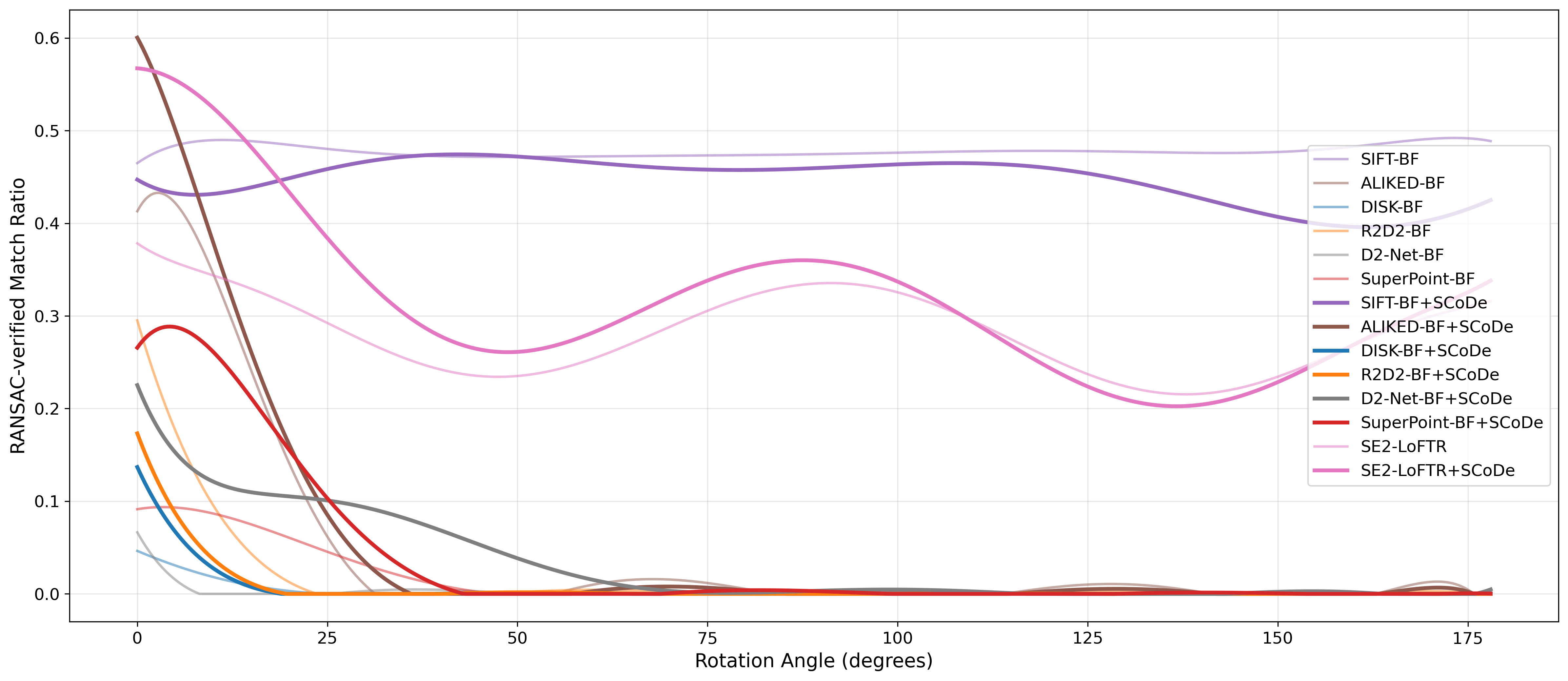

Rotation invariance with and without SCoDe region constraints.

Qualitative examples in indoor and outdoor scenes showing detection accuracy.

BibTeX

@article{pan2025scale,
  title={Scale-aware co-visible region detection for image matching},
  author={Pan, Xu and Xia, Zimin and Zheng, Xianwei},
  journal={ISPRS Journal of Photogrammetry and Remote Sensing},
  volume={229},
  pages={122--137},
  year={2025},
  publisher={Elsevier}
}