Hello World!

To Shape the Future of Intelligence Beyond the Digital World.

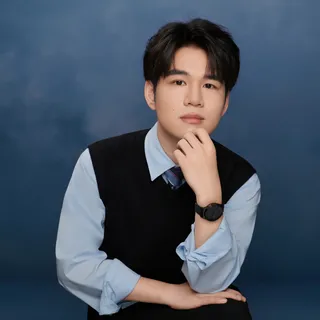

Hi, I am Xu Pan, an incoming Research Associate at the Perception and Embodied Intelligence (PINE) Lab, School of Electrical and Electronic Engineering (EEE), Nanyang Technological University (NTU), supervised by Prof. Ziwei Wang.

My research focuses on embodied intelligence, with an emphasis on spatial representations that enable generalizable perception-action coupling for embodied agents.

I study how structure-aware representations can support robust interaction, enabling agents to generalize across diverse environments, viewpoints, and embodiments, with a particular interest in agent-centric policy learning and spatial reasoning.

Previously, I received my B.Eng. and M.Sc. degrees from Wuhan University, where I worked at the State Key Laboratory of Information Engineering in Surveying, Mapping and Remote Sensing (LIESMARS). I also interned at Baidu and was a remote Research Assistant at the Centre for Frontier AI Research (CFAR), Agency for Science, Technology and Research (A*STAR), working with Dr. Xingrui Yu.

More broadly, I aim to develop scalable and generalizable learning frameworks that advance spatial intelligence and enable embodied systems to operate reliably in complex real-world settings.

News

- May 2026 Successfully defended my Master's thesis with all-excellent ratings.

- May 2026 One paper accepted to the CVPR 2026 Workshop on 3D-LLM/VLA. [Paper]

- May 2026 Attended the 2026 Vision and Learning Seminar (VALSE) in Wuhan, China.

- Mar 2026

- Jan 2026

- Nov 2025 Successfully defended my Master's thesis proposal.

- Aug 2025 My first paper was accepted by the ISPRS Journal of Photogrammetry and Remote Sensing. [Paper]

- Aug 2025 Began a remote research internship at CFAR, A*STAR, supervised by Dr. Xingrui Yu and in collaboration with Zhenglin Wan.

- Jul 2025 Attended the 2025 CSGPC Annual Academic Conference on Photogrammetry and Remote Sensing in Kunming, China.

- Dec 2024 Began a research internship at Baidu in Shenzhen, supervised by Dr. Yan Zhang, exploring frontier text-to-image and text-to-video generation.

- Jul 2024 Began collaboration on the SCoDe project under the guidance of Dr. Zimin Xia.

- Sep 2023 Enrolled in the Master's program at the State Key Laboratory LIESMARS, Wuhan University, as a student admitted via recommendation (exempt from the national entrance examination), supervised by Prof. Xianwei Zheng.

Experiences

Nanyang Technological University (NTU)

Centre for Frontier AI Research (CFAR), Institute of High Performance Computing (IHPC), Agency for Science, Technology and Research (A*STAR)

Baidu International Technology (Shenzhen) Co., Ltd.

State Key Laboratory of Information Engineering in Surveying, Mapping and Remote Sensing (LIESMARS)

Acknowledgements

I’m grateful to my mentors and collaborators for their guidance and support, especially Prof. Ziwei Wang (NTU), Dr. Xingrui Yu (A*STAR), Dr. Zimin Xia (EPFL), Dr. Yan Zhang (Baidu), and Prof. Hanjiang Xiong (WHU), as well as to my colleagues and peers, including Zhenglin Wan (NUS), Jiashen Huang (NTU), Ziqong Lu (HKU), Qiyuan Ma (WHU), Jintao Zhang (WHU), Chenyu Zhao (WHU), and He Chen (WHU), and to everyone else I’ve had the pleasure of working with.

Selected Publications

SA-VLA: Spatially-Aware Reinforcement Learning for Flow-Matching Vision-Language-Action Models

Xu Pan, Zhenglin Wan, Xingrui Yu*, Xianwei Zheng, Youkai Ke, Ming Sun, Rui Wang, Ziwei Wang, Ivor Tsang

Projects

SA-VLA

2026

A research project on robust reinforcement learning (RL) adaptation of flow-matching–based vision-language-action (VLA) models for robotic manipulation, focusing on generalization under distribution shifts on challenging benchmarks.

Co-visibility Guided Image Matching

2025

A research project on robust image matching for robot vision, photogrammetry, and remote sensing that uses explicit co-visibility modeling to handle extreme scale and viewpoint variations.

GNDAS

2022

GNDAS (Global Natural Disaster Assessment System) is a web-based geographic information system (GIS) application for analyzing and assessing natural disasters.

I2RSI

2022

I2RSI (Intelligent Interpretation of Remote Sensing Images) is a web-based application for remote sensing image interpretation, built on Baidu's PaddlePaddle deep learning framework.

Honors & Awards

- 2026.04

Outstanding Graduating Graduate Student

Wuhan University

- 2025.11

Outstanding Graduate Student

Wuhan University

- 2025.11

Graduate Academic Excellence Scholarship

Wuhan University

- 2024.11

Graduate Academic Excellence Scholarship

Wuhan University

- 2024.10

Outstanding Student Leader

Wuhan University

- 2024.08

Outstanding Student Club Leader

Wuhan University

- 2023.05

Active Contributor to Social Activities

School of Remote Sensing and Information Engineering

- 2022.10

Wuhan University Class C Scholarship

Wuhan University

- 2022.08

National Second Prize

China Software Cup College Student Software Design Competition

- 2022.03

Honorable Mention

Mathematical Contest in Modeling

- 2021.12

Third Prize

Asia and Pacific Mathematical Contest in Modeling

- 2021.10

First Prize in Hubei Division

China Undergraduate Mathematical Contest in Modeling

- 2021.09

Outstanding Student

Wuhan University

- 2021.03

Bronze Medal

China Collegiate Algorithm Design & Programming Challenge Contest

- 2020.05

Second Prize in the Final

Translation & Interpreting Contest of Hubei Province

Academic Service

| Role | Organization / Event | Year |
|---|---|---|
| Reviewer | 10th Annual Conference on Robot Learning (CoRL'26) | 2026 |
| Member | ISPRS Student Consortium (ISPRS SC) | 2024 |
| Volunteer | 2023 International Graduate Workshop on Geo-Informatics (IGWG'23) | 2023 |
| Volunteer | 2022 International Graduate Workshop on Geo-Informatics (IGWG'22) | 2022 |

Personal Philosophy

I follow Stoic philosophy. Life is a joyful ascent: a true mountaineer delights in the climb itself, not just the summit.

“Thou sufferest this justly: for thou choosest rather to become good to-morrow than to be good to-day.” (Marcus Aurelius, Meditations)

I also resonate with the spirit of Slow Science.

We live in an age tyrannized by efficiency, outcomes, and speed, to the point that nothing lasts and nothing leaves a deep impression. In the midst of noisy bubbles and short-lived hype, I hope to take time to think carefully, to doubt, to refine, and to do research that is genuinely meaningful and worth remembering.